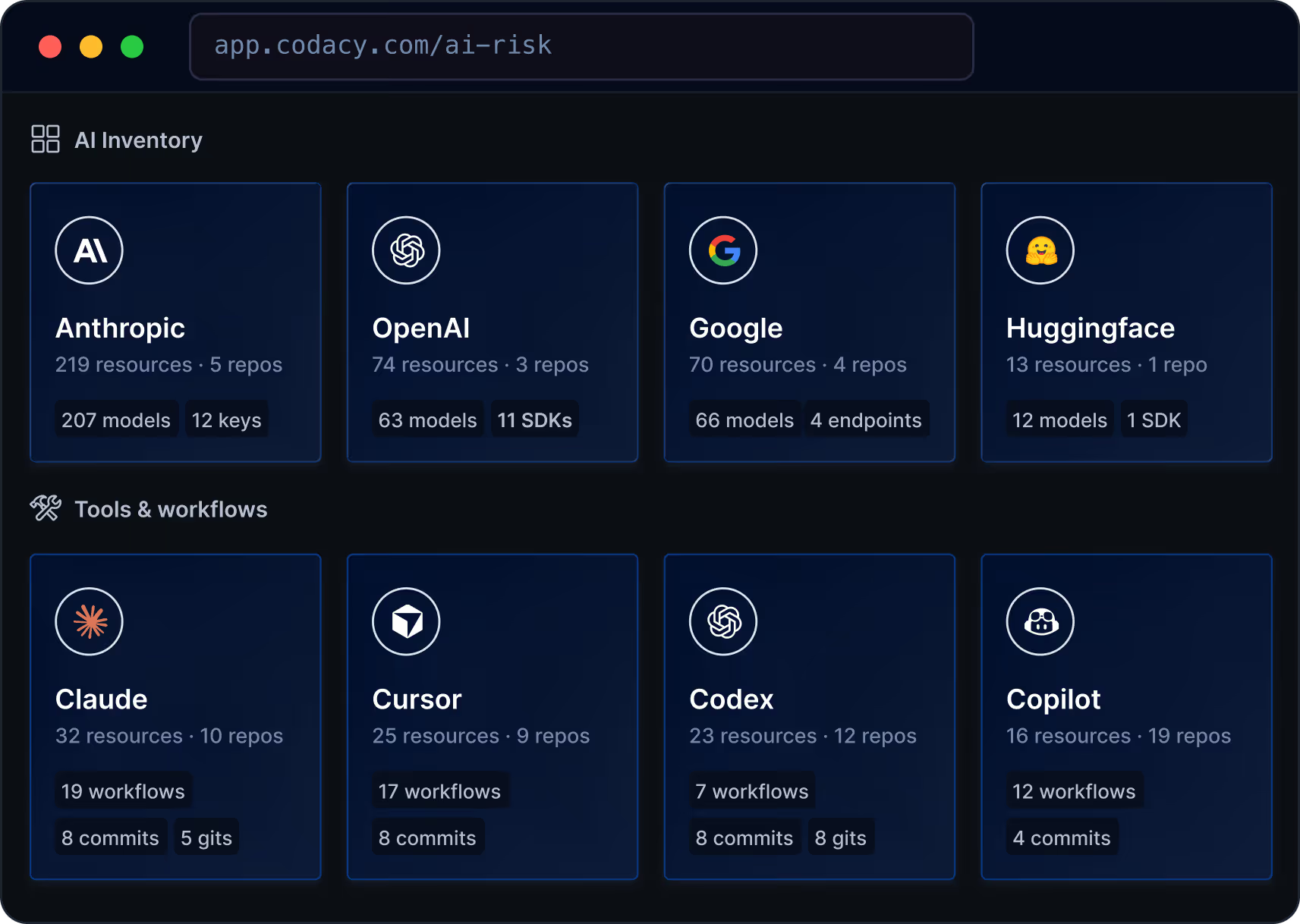

See your AI Inventory, instantly.

Track every AI model, MCP server, and coding tool used across your codebase. Get continuous, audit-ready AI Inventory reports and stay ahead of the regulatory compliance curve.

See AI Inventory in action

The evidence is in your repos

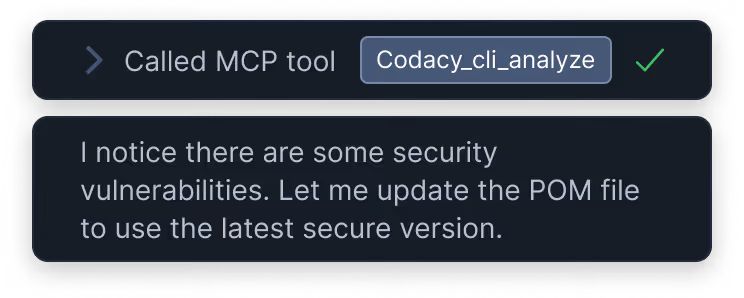

No agents. No plugins. No manual triggers.

2. AI Inventory scans committed artifacts

Config files, dependency manifests, commit co-author metadata, and environment variable references. Detection runs automatically on every commit.
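To illustrate the commit co-author signal: some AI agents (Claude Code, for example) add a Git `Co-authored-by` trailer to the commits they help write. The Python sketch below assumes a hypothetical lookup table keyed by co-author email. `KNOWN_AI_COAUTHORS` and `ai_coauthors` are invented for this example and do not describe how AI Inventory works internally.

```python
import re

# Hypothetical, illustrative table of co-author emails that AI agents may add.
# This is NOT AI Inventory's actual rule set.
KNOWN_AI_COAUTHORS = {
    "noreply@anthropic.com": "Claude Code",
}

# Trailers look like "Co-authored-by: Name <email>", one per line; the key is
# matched case-insensitively because tools differ in capitalization.
COAUTHOR_RE = re.compile(
    r"^co-authored-by:\s*(?P<name>.+?)\s*<(?P<email>[^>]+)>\s*$",
    re.IGNORECASE | re.MULTILINE,
)


def ai_coauthors(commit_message: str) -> list[str]:
    """Return the AI tools named in a commit message's Co-authored-by trailers."""
    tools = []
    for match in COAUTHOR_RE.finditer(commit_message):
        email = match.group("email").lower()
        if email in KNOWN_AI_COAUTHORS:
            tools.append(KNOWN_AI_COAUTHORS[email])
    return tools
```

Human co-authors are ignored because only emails present in the lookup table produce a result.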

Review

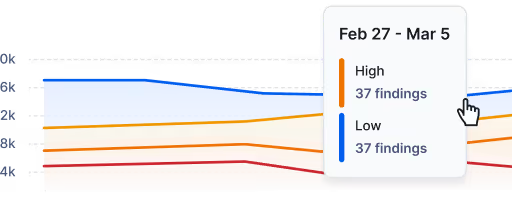

- Secret scanning

- Insecure dependencies (SCA)

- AI policy violations

- SQL Injections

- SAST

- Unapproved model calls

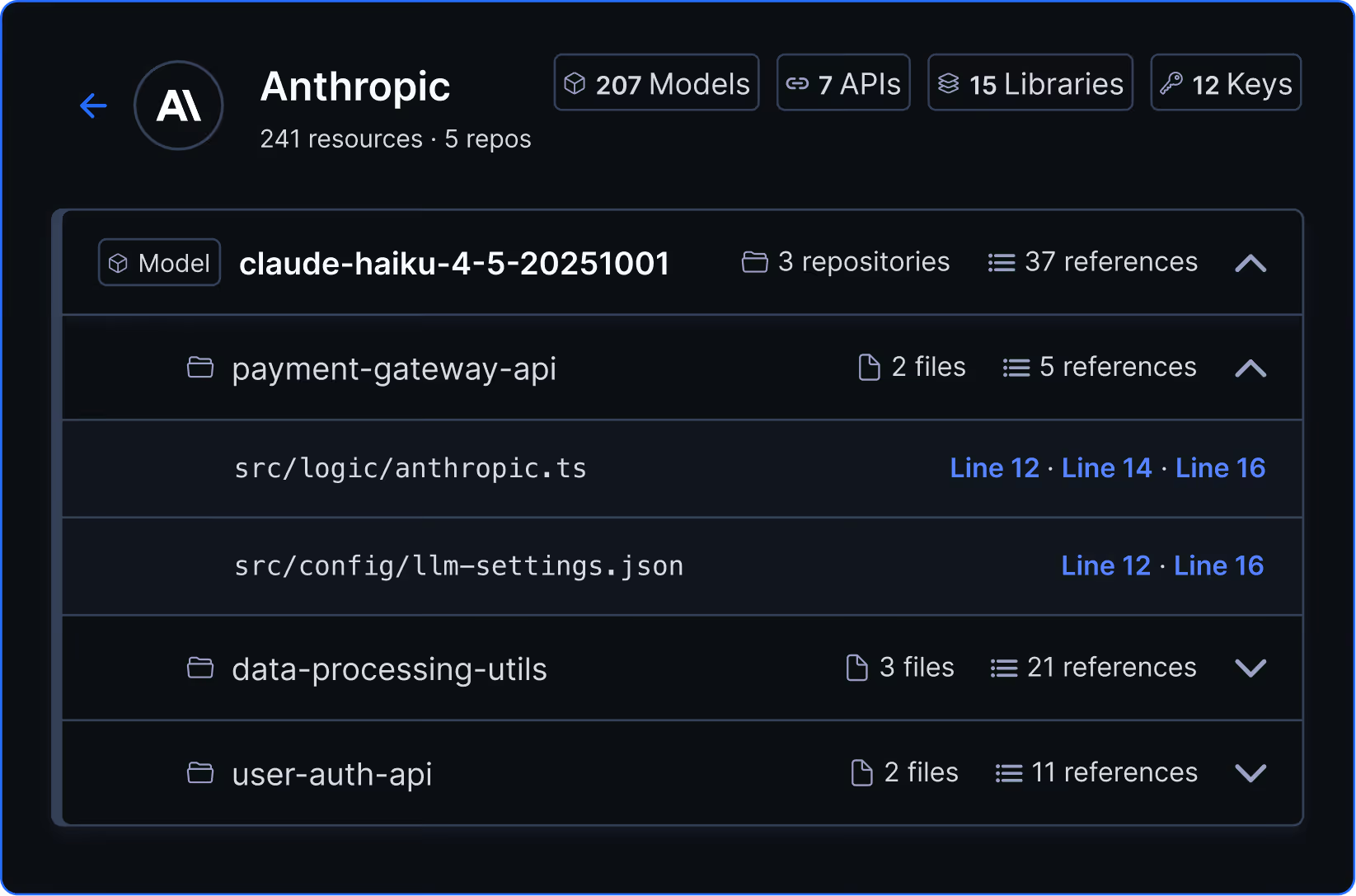

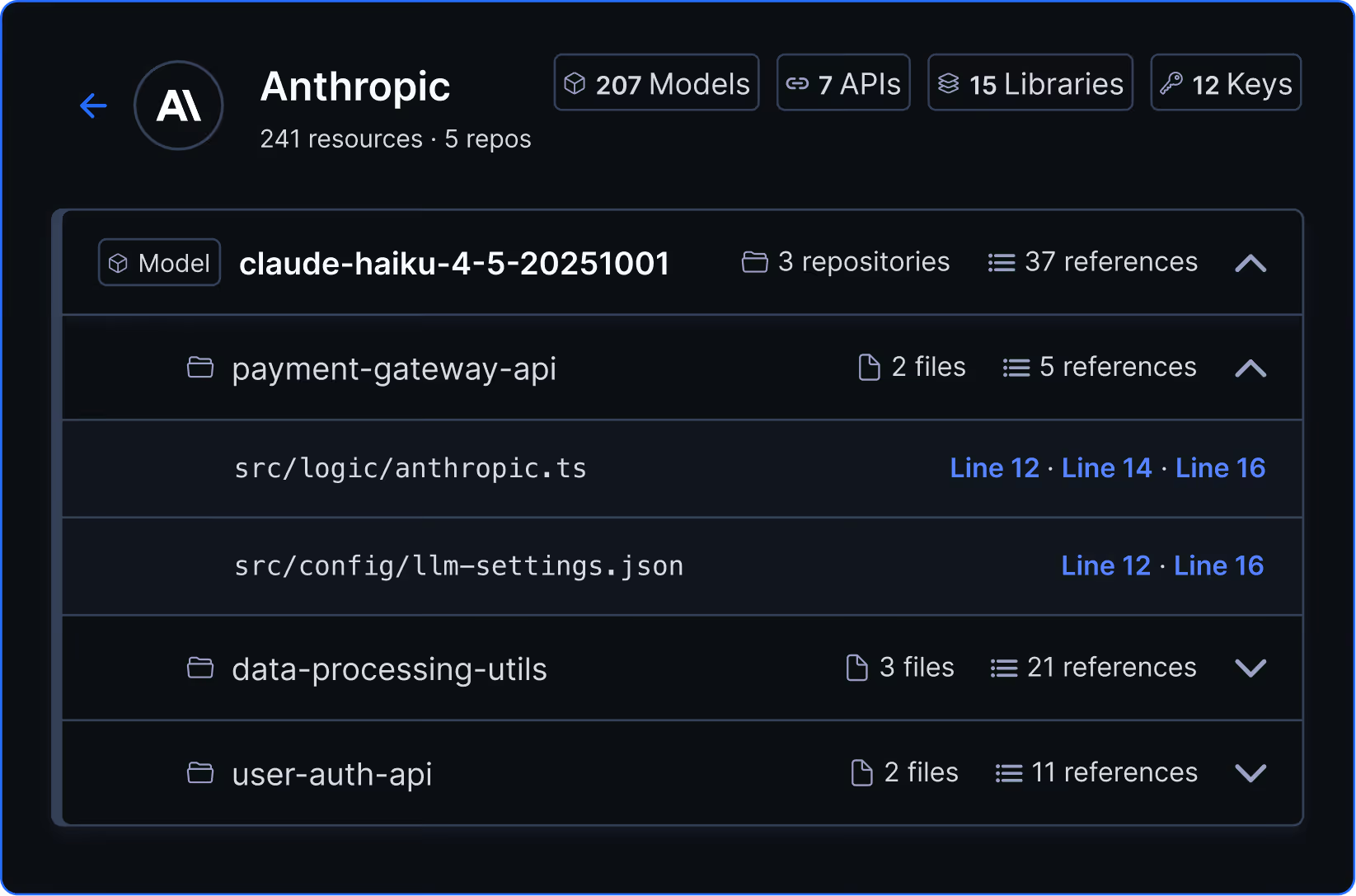

Every AI model and dev tool living in your code. Tracked continuously.

AI Inventory detects AI usage that leaves traces in your source code, file structure, and git history. All artifact types, across every connected repo.

API keys and endpoints to AI providers

Environment variable references and keys pointing to OpenAI, Anthropic, and other AI model provider services.
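A minimal sketch of how environment variable and endpoint references could be matched with regular expressions. `OPENAI_API_KEY`, `ANTHROPIC_API_KEY`, `api.openai.com`, and `api.anthropic.com` are the conventional names used by those providers' SDKs and APIs, but the pattern tables and `find_provider_references` are hypothetical. The sketch records only which kind of reference was found, never a key value.

```python
import re

# Illustrative patterns only; not AI Inventory's real detection rules.
ENV_VAR_PATTERNS = {
    "OpenAI": re.compile(r"\bOPENAI_API_KEY\b"),
    "Anthropic": re.compile(r"\bANTHROPIC_API_KEY\b"),
}
ENDPOINT_PATTERNS = {
    "OpenAI": re.compile(r"\bapi\.openai\.com\b"),
    "Anthropic": re.compile(r"\bapi\.anthropic\.com\b"),
}


def find_provider_references(source: str) -> dict[str, set[str]]:
    """Map each provider to the reference kinds ("env_var", "endpoint") found in source."""
    found: dict[str, set[str]] = {}
    for kind, patterns in (("env_var", ENV_VAR_PATTERNS), ("endpoint", ENDPOINT_PATTERNS)):
        for provider, pattern in patterns.items():
            if pattern.search(source):
                found.setdefault(provider, set()).add(kind)
    return found
```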

AI coding tool configs

Find tool-specific config files committed to your repos, such as .cursorrules, CLAUDE.md, and other AI tool settings that show exactly what's configured and where.
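Config-file detection can be approximated by matching repo-relative paths against known file names. The names below are real conventions for each tool, but the table is a small hypothetical subset, and `detect_tool_configs` is an illustration rather than AI Inventory's implementation.

```python
from pathlib import PurePosixPath

# Hypothetical subset mapping committed config files to the tool they imply.
TOOL_CONFIG_FILES = {
    ".cursorrules": "Cursor",
    "CLAUDE.md": "Claude Code",
    ".windsurfrules": "Windsurf",
    ".clinerules": "Cline",
    ".github/copilot-instructions.md": "GitHub Copilot",
}


def detect_tool_configs(repo_paths: list[str]) -> dict[str, list[str]]:
    """Group repo-relative file paths by the AI coding tool their config file implies."""
    found: dict[str, list[str]] = {}
    for path in repo_paths:
        p = PurePosixPath(path)
        for pattern, tool in TOOL_CONFIG_FILES.items():
            # Bare file names match anywhere in the tree; patterns with a
            # directory component must match the repo-relative path exactly.
            matched = p.name == pattern if "/" not in pattern else p.as_posix() == pattern
            if matched:
                found.setdefault(tool, []).append(path)
    return found
```

Matching bare names anywhere in the tree catches per-directory files such as a CLAUDE.md inside a service folder.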

MCP server definitions

Detect which MCP servers are defined, what tools they expose, and which repos have them, including servers your team may not have formally approved.
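Several MCP clients, for example Claude Code with a project-level `.mcp.json` and Cursor with `.cursor/mcp.json`, describe servers in an `mcpServers` object keyed by server name. Assuming that layout, a sketch that lists each defined server and how it is launched might look like this. `list_mcp_servers` is hypothetical and reads only names and launch targets from the file.

```python
import json


def list_mcp_servers(config_text: str) -> dict[str, str]:
    """Return {server_name: launch target} from a config using the "mcpServers" layout."""
    config = json.loads(config_text)
    servers = {}
    for name, spec in config.get("mcpServers", {}).items():
        # Local (stdio) servers are started with a command plus args;
        # remote servers are identified by a URL instead.
        if "command" in spec:
            target = " ".join([spec["command"], *spec.get("args", [])])
        else:
            target = spec.get("url", "<unknown>")
        servers[name] = target
    return servers
```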

Audit-ready visibility

Full inventory exports for audits and compliance. Export your AI tool inventory as a structured report for board presentations, audit preparation, or compliance documentation.
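The format of AI Inventory's export is not described here, so the following is only a sketch of turning inventory findings into a structured CSV report. The `Finding` record, its field names, and `export_csv` are assumptions made for the example.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class Finding:
    """One hypothetical inventory entry; field names are illustrative."""
    repository: str
    category: str  # e.g. "model", "sdk", "coding_tool", "mcp_server"
    name: str
    path: str


def export_csv(findings: list[Finding]) -> str:
    """Serialize findings as CSV, sorted so repeated exports diff cleanly."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(Finding)])
    writer.writeheader()
    for finding in sorted(findings, key=lambda f: (f.repository, f.category, f.name, f.path)):
        writer.writerow(asdict(finding))
    return buffer.getvalue()
```

Sorting before writing keeps the row order stable between runs, so two exports can be compared line by line during an audit.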

Audit readiness starts with knowing what AI is in your code.

AI Inventory is the first step toward audit-ready AI governance. Know what AI is in your code before your auditors ask.

The EU AI Act requires organisations deploying or developing high-risk AI systems to demonstrate risk management practices (Art. 9) and accuracy measures (Art. 15). For engineering organisations, this starts with an accurate inventory of AI systems and components in your codebase.

AI Inventory produces that inventory from source code artifacts, giving you an evidence base for the documentation obligations ahead.

ISO 42001 provides a framework for establishing an AI management system. A key requirement is maintaining an inventory of AI assets and understanding how AI is used within your organisation’s processes.

AI Inventory identifies AI models, libraries, API keys, and coding tools across your repositories, providing the asset visibility that ISO 42001 implementation requires.

DORA Articles 28–44 require financial institutions to maintain a Register of Information covering ICT third-party risk. AI coding tools, including model API integrations and agent frameworks, now fall within scope.

AI Inventory detects these integrations from source code artifacts across your repos, supporting the third-party ICT risk documentation that DORA requires.


Frequently asked questions

AI Inventory detects AI models, API keys and endpoints, SDKs, tools, and workflows across every repository connected to your Codacy organization. This includes:

- Model references from Anthropic, OpenAI, and Google

- AI SDKs, libraries, and packages across Python, JavaScript, Go, Ruby, Rust, Java, and .NET, including 35+ Python and 18+ JavaScript packages

- 28+ AI coding tools, including Cursor, Claude Code, GitHub Copilot, Cline, Windsurf, and more
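Detecting SDKs from dependency manifests comes down to parsing each manifest and checking declared package names against a known list. The sketch below covers requirements.txt and package.json only. The package sets are a small hypothetical subset of real AI SDK names, and both helper functions are illustrative rather than AI Inventory's implementation.

```python
import json
import re

# Illustrative subsets of real AI SDK package names; not the product's list.
AI_PYTHON_PACKAGES = {"openai", "anthropic", "langchain", "google-genai"}
AI_JS_PACKAGES = {"openai", "@anthropic-ai/sdk", "langchain", "@google/genai"}


def python_ai_packages(requirements_txt: str) -> set[str]:
    """Return known AI packages declared in a requirements.txt file."""
    found = set()
    for line in requirements_txt.splitlines():
        line = line.split("#", 1)[0].strip()
        if not line or line.startswith("-"):
            continue  # skip blanks, comments, and pip options such as -r or -e
        # The name ends at the first extras, version, marker, or whitespace character.
        name = re.split(r"[\s\[<>=!~;@]", line, maxsplit=1)[0]
        # PEP 503 normalization: case-insensitive; runs of -, _ and . are equivalent.
        normalized = re.sub(r"[-_.]+", "-", name).lower()
        if normalized in AI_PYTHON_PACKAGES:
            found.add(normalized)
    return found


def js_ai_packages(package_json: str) -> set[str]:
    """Return known AI packages declared in package.json dependencies."""
    manifest = json.loads(package_json)
    declared = {**manifest.get("dependencies", {}), **manifest.get("devDependencies", {})}
    return {name for name in declared if name in AI_JS_PACKAGES}
```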

AI Inventory only detects AI usage that leaves traces in your repositories. Inline autocomplete tools (Copilot tab completions, Tabnine, Codeium) and copy-paste from the ChatGPT or Claude web apps leave no repository traces, so they are not detected.

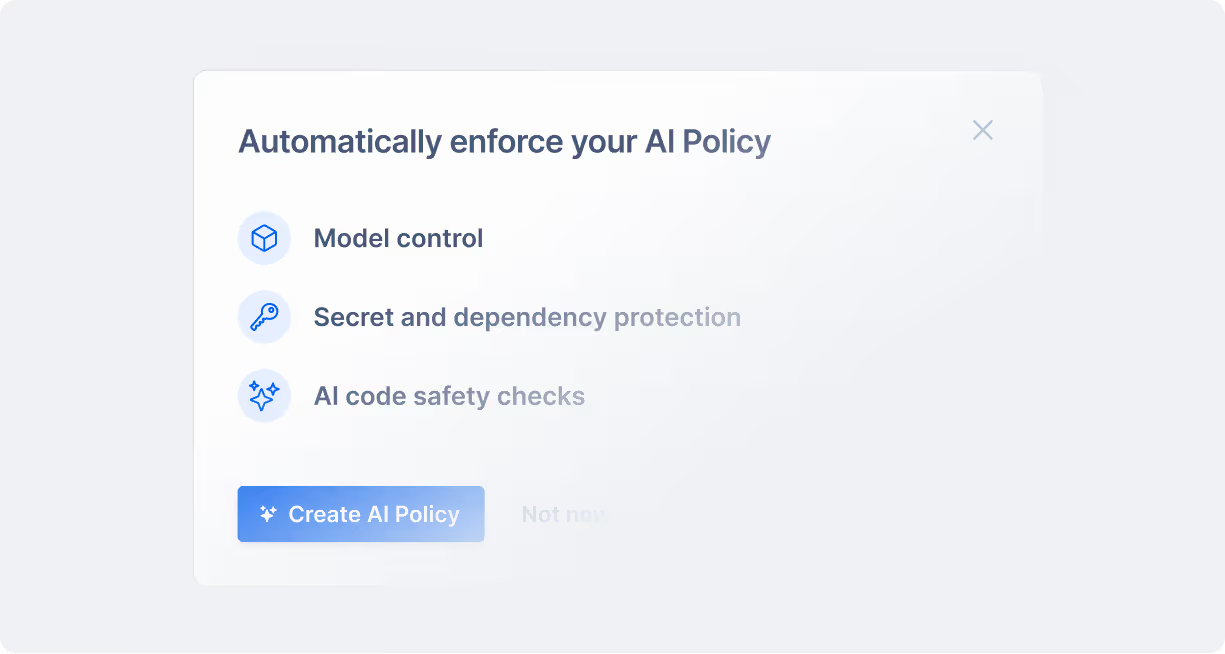

AI Inventory does not enforce policies in the initial release. It is a visibility tool: it does not block merges, enforce allow-lists, or gate deployments based on findings. Policy enforcement and merge gates based on AI Inventory findings are being investigated for a future release. If you need to act on what you find, the current workflow is to use the inventory view to inform standardization and procurement decisions.

AI Inventory is generally available on the Codacy Business plan, and as a temporary preview for Team plan customers until May 18, 2026. It is also included in the free 14-day trial, so connecting your repos during a trial will surface your full AI inventory after the first scan.

AI Inventory does not use AI to detect AI. It relies on pattern matching and config file parsing, not machine learning or LLMs: it reads what is committed to your repositories and identifies known AI artifacts based on defined patterns. This distinction matters if your security team is cautious about AI in the toolchain: there is no model making inferences about your code.

AI Inventory does not make you compliant by itself, but it gives you the evidence base that compliance requires.

The EU AI Act's high-risk obligations take effect August 2, 2026, and require organizations to demonstrate risk management practices for AI systems — which starts with knowing what AI is present in your codebase. AI Inventory provides a continuously updated, source-code-level record of every AI model, library, API key, and coding tool across your repositories. That's the foundation any audit or documentation requirement builds on.

For EU financial institutions, DORA already requires a maintained Register of Information covering ICT third-party risk — a category that now includes AI coding tools and model API integrations. AI Inventory surfaces exactly the artifacts that register needs to reflect: which tools are in use, across which repositories, and with what configurations.

In both cases, the alternative is relying on developer surveys that are incomplete the moment someone installs a new tool. AI Inventory gives compliance and security teams a defensible, continuously updated view drawn directly from source code.

Future releases will add exportable AI Bills of Materials (AI-BOM) for audit-ready documentation. In the initial release, the value is visibility.